Mistral AI, a new AI startup, has released an open-source large language model, MoE 8x7B, through torrent links, with no accompanying paper, blog post, or documentation. The model has been described as a smaller version of GPT-4.

Unlike industry giants such as Google, known for elaborate launches like Gemini, Mistral AI embraced simplicity. While Google faced criticism for glossy AI videos, Mistral chose a minimalistic approach, sharing a direct model download link instead of elaborate promotional content.

Mistral AI’s departure from conventional practices extended to the absence of papers or blogs accompanying the release. This deviation from the norm generated considerable chatter within the AI community, sparking discussions on the model’s capabilities and potential applications.

The decision to share the model via a torrent link, rather than through a traditional marketing campaign, elicited varied reactions. Observers noted Mistral AI’s penchant for unusual approaches, and proponents pointed to the strategy’s success in generating interest and dialogue. This suggests a calculated move on Mistral’s part to stand out in a crowded AI landscape.

Distinctive Features of Mistral AI’s MoE 8x7B

Mistral’s approach to releasing MoE 8x7B shifts the focus from flashy promotion to the model’s capabilities, in keeping with the company’s preference for substance over spectacle. This unconventional release strategy may signal a shift in how cutting-edge AI technology is introduced, emphasizing functionality over hype.

MoE 8x7B has been described as a scaled-down GPT-4. It uses a Mixture of Experts (MoE) architecture with 8 experts of 7 billion parameters each. Although the model contains 8 experts, it routes each token to only 2 of them, so only a fraction of its parameters are active for any given token, which is what makes it efficient.
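The routing described above can be illustrated with a minimal Python sketch. It is not Mistral's implementation: the toy linear "experts", the `make_expert`, `top_k_route`, and `moe_layer` names, and the choice to apply a softmax over only the selected experts' scores are all assumptions made for illustration, following a common formulation of top-k MoE routing. Only the figures of 8 experts and 2 active experts per token come from the article.

```python
import math
import random

NUM_EXPERTS = 8  # number of experts, as stated in the article
TOP_K = 2        # experts used per token, as stated in the article


def make_expert(seed, dim):
    """Hypothetical stand-in for an expert: a fixed random linear map."""
    rng = random.Random(seed)
    weights = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)]

    def expert(x):
        return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

    return expert


def top_k_route(gate_logits, k=TOP_K):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(gate_logits)),
                 key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the selected logits only, so the k weights sum to 1.
    peak = max(gate_logits[i] for i in top)
    exps = [math.exp(gate_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_layer(x, gate_weights, experts, k=TOP_K):
    """Send one token vector through k selected experts and mix their outputs."""
    # The gate is a linear layer producing one score per expert.
    gate_logits = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    routes = top_k_route(gate_logits, k)
    out = [0.0] * len(x)
    for idx, weight in routes:
        y = experts[idx](x)  # only the selected experts are evaluated
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out, routes
```

In this sketch the remaining 6 experts are never called for a given token, which is where the compute saving comes from: the cost per token scales with the 2 selected experts rather than with all 8.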

Based in Paris, Mistral AI has drawn attention for its unconventional tactics. The startup was valued at $2 billion after a substantial funding round led by Andreessen Horowitz; it had earlier raised $118 million in a seed round, a record for Europe. Mistral has also taken part in discussions on the EU AI Act, which indicates an influence beyond its model releases.

Mistral AI’s release of MoE 8x7B via a simple torrent link departs from conventional AI launch strategies. The move has ignited curiosity within the AI community and underscores Mistral’s emphasis on quality and performance over ostentatious marketing. It may also influence how groundbreaking AI technology is introduced, putting simplicity and core capabilities in the spotlight.

Similar Posts

-

Mistral AI Revolutionary LLM: MoE8*7B Via Torrent Links

-

Stability AI has launched Stable Video Diffusion: A text-to-video Platform

-

PIKA: An Idea-to-Video Platform

-

Microsoft to Hire OpenAI Co-founder Sam Altman for New AI Research Team On 20th NOV

-

DeepMind’s Improved AlphaFold Model Helping in Drug Discovery

-

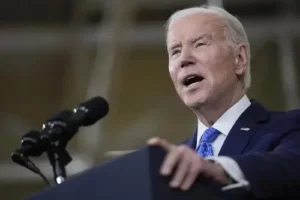

President Biden Takes a Risk by Signing an AI Executive Order